The throughput benchmark test of evpp against Boost.Asio

Boost.Asio is a cross-platform C++ library for network and low-level I/O programming that provides developers with a consistent asynchronous model using a modern C++ approach.

- evpp-v0.2.4 based on libevent-2.0.21

- asio-1.10.8

- Linux CentOS 6.2, 2.6.32-220.7.1.el6.x86_64

- Intel(R) Xeon(R) CPU E5-2630 v2 @ 2.60GHz

- gcc version 4.8.2 20140120 (Red Hat 4.8.2-15) (GCC)

We use the test method described at http://think-async.com/Asio/LinuxPerformanceImprovements, which measures throughput with a ping-pong protocol.

In short, in the ping-pong protocol both the client and the server implement the echo protocol. Once the TCP connection is established, the client sends some data to the server, the server echoes it back, and the client then echoes it to the server again, and so on. The data travels back and forth between the client and the server like a table-tennis ball until one side disconnects. This is a common way to test throughput.
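To make the protocol concrete, here is a minimal sketch of a ping-pong exchange on Linux. It is not the benchmark code from either repository: it assumes a local `socketpair` and a forked echo process in place of TCP and an event loop, and the names `RunPingPong`, `EchoUntilClosed`, and `ThroughputMiBps` are made up for illustration. The MiB/s unit is also an assumption, since the report does not state the unit used in the charts.

```cpp
#include <sys/socket.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

#include <cassert>
#include <cstddef>
#include <vector>

// Send exactly len bytes. MSG_NOSIGNAL (Linux) avoids SIGPIPE if the peer is gone.
static bool SendAll(int fd, const char* data, std::size_t len) {
    std::size_t off = 0;
    while (off < len) {
        ssize_t n = ::send(fd, data + off, len - off, MSG_NOSIGNAL);
        if (n <= 0) return false;
        off += static_cast<std::size_t>(n);
    }
    return true;
}

// Receive exactly len bytes; returns false on EOF or error.
static bool RecvAll(int fd, char* data, std::size_t len) {
    std::size_t off = 0;
    while (off < len) {
        ssize_t n = ::recv(fd, data + off, len - off, 0);
        if (n <= 0) return false;
        off += static_cast<std::size_t>(n);
    }
    return true;
}

// Server side: echo whatever arrives until the peer disconnects.
static void EchoUntilClosed(int fd, std::size_t buffer_size) {
    std::vector<char> buf(buffer_size);
    for (;;) {
        ssize_t n = ::recv(fd, buf.data(), buf.size(), 0);
        if (n <= 0) return;  // 0: peer closed, <0: error
        if (!SendAll(fd, buf.data(), static_cast<std::size_t>(n))) return;
    }
}

// Client side: bounce one message `rounds` times and return the bytes received back.
// Assumes the message fits into the socket buffers (true for small sizes such as 4096).
std::size_t RunPingPong(std::size_t message_size, int rounds) {
    assert(message_size > 0 && rounds > 0);
    int fds[2];
    if (::socketpair(AF_UNIX, SOCK_STREAM, 0, fds) != 0) return 0;

    pid_t pid = ::fork();
    if (pid < 0) {
        ::close(fds[0]);
        ::close(fds[1]);
        return 0;
    }
    if (pid == 0) {  // child process: the echo server
        ::close(fds[0]);
        EchoUntilClosed(fds[1], message_size);
        ::close(fds[1]);
        ::_exit(0);
    }

    ::close(fds[1]);
    std::vector<char> msg(message_size, 'x');
    std::size_t received = 0;
    bool ok = SendAll(fds[0], msg.data(), msg.size());  // the first "serve"
    for (int i = 0; ok && i < rounds; ++i) {
        ok = RecvAll(fds[0], msg.data(), msg.size());   // server echoed it back
        if (!ok) break;
        received += msg.size();
        if (i + 1 < rounds) ok = SendAll(fds[0], msg.data(), msg.size());
    }
    ::close(fds[0]);  // disconnecting ends the exchange
    ::waitpid(pid, nullptr, 0);
    return received;
}

// Throughput in MiB/s for a given byte count and elapsed time (unit is an assumption).
double ThroughputMiBps(std::size_t bytes, double seconds) {
    return static_cast<double>(bytes) / (1024.0 * 1024.0) / seconds;
}
```

Calling `RunPingPong(4096, 100)` bounces a 4096-byte message 100 times; timing that call and passing the returned byte count to `ThroughputMiBps` gives a rough single-connection figure. The real benchmark runs many connections concurrently for a fixed duration, which this sketch does not attempt.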

The evpp test code is in the source tree under benchmark/throughput/evpp, and is also available at https://github.com/Qihoo360/evpp/tree/master/benchmark/throughput/evpp. We use tools/benchmark-build.sh to compile it. The test scripts are single_thread.sh and multiple_thread.sh.

The asio test code is at https://github.com/huyuguang/asio_benchmark, using commit 21fc1357d59644400e72a164627c1be5327fbe3d and the client2.cpp/server2.cpp test code. The test scripts are single_thread.sh and multiple_thread.sh.

We have done two benchmarks:

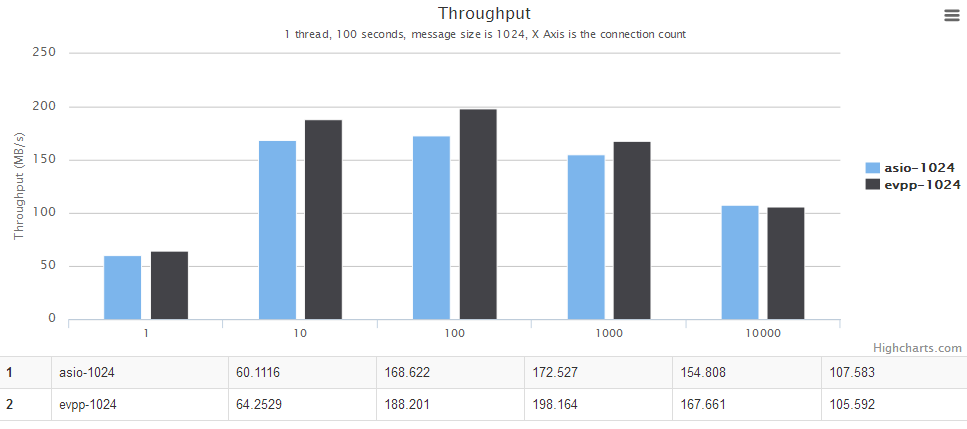

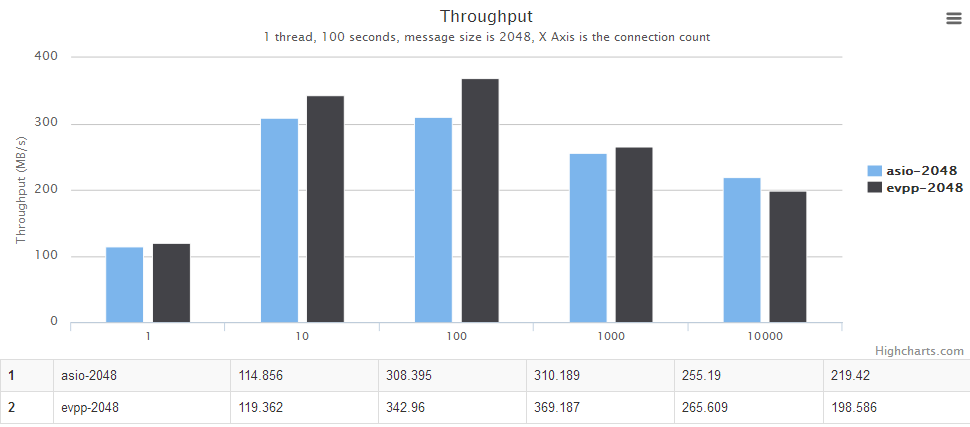

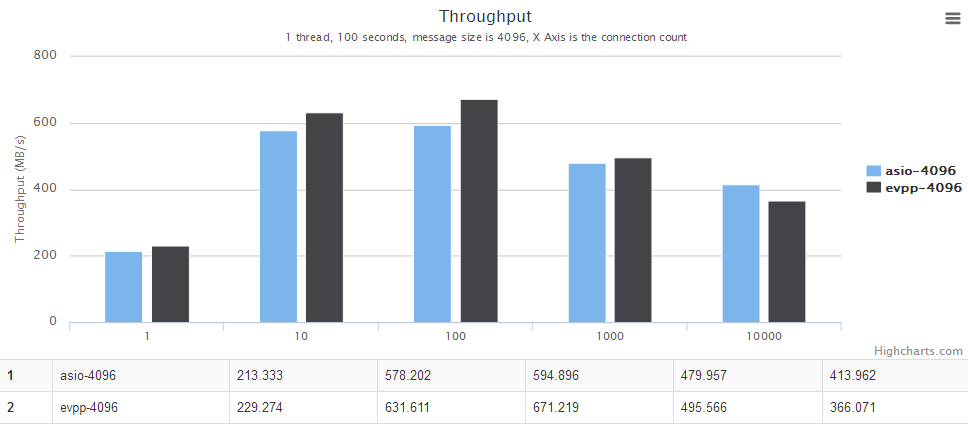

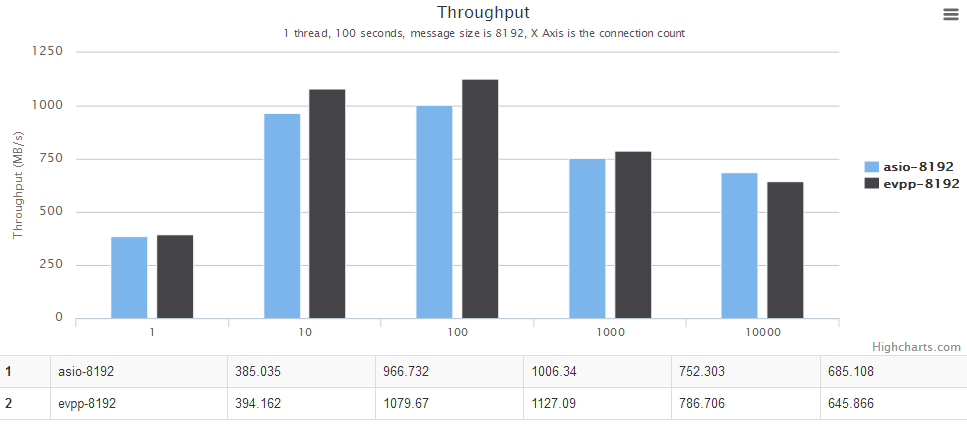

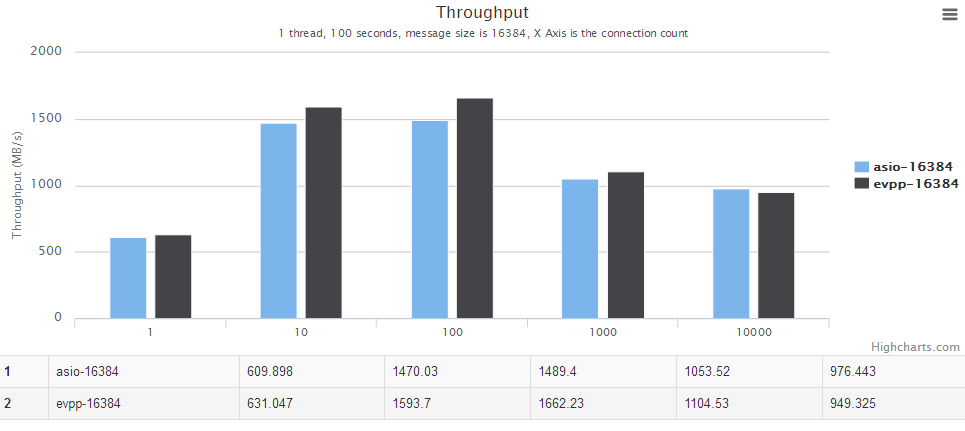

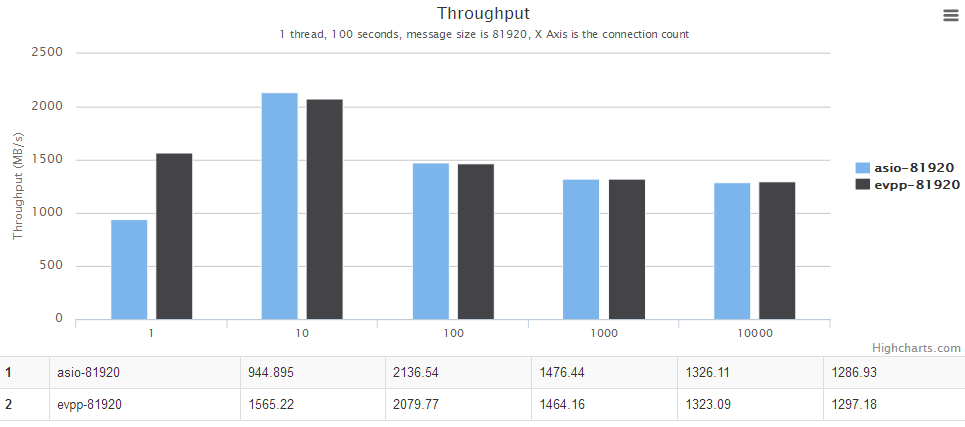

- Single thread : When the number of concurrent connections is 1/10/100/1000/10000, the message size is 1024/2048/4096/8192/16384/81920.

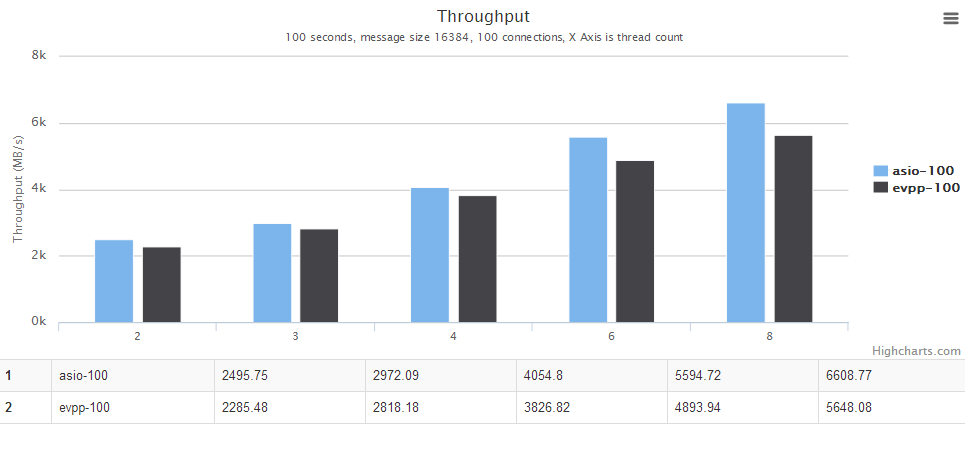

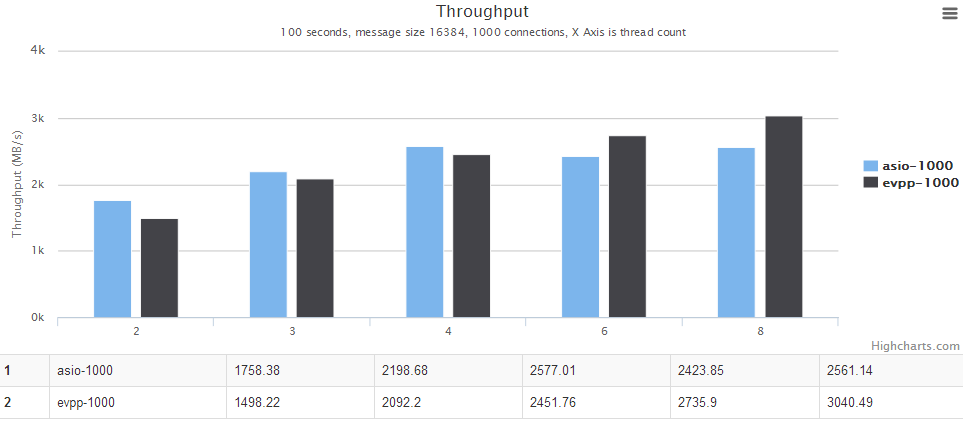

- Multi-threaded : When the number of concurrent connections is 100 or 1000, the number of threads in the server and the client is set to 2/3/4/6/8, the message size is 16384 bytes

- When the number of concurrent connections is 10,000 or more in the test, asio is better, the average is higher than evpp 5%~10%

- When the number of concurrent connections is 1,10,100,1000 in the test, evpp's performance is better, the average is higher than asio 10%~20%

For details, see the chart below, the horizontal axis is the number of concurrent connections. The vertical axis is the throughput, the bigger the better.

- When the number of concurrent connections is 1000, evpp and asio have a similar performance and have their own advantages.

- When the number of concurrent connections is 100, asio is better performing to 10% in this case.

For details, see the chart below. The horizontal axis is the number of threads. The vertical axis is the throughput, the bigger the better.

In this ping-pong benchmark, the asio test code uses a fixed-size buffer to receive and send data. This takes full advantage of asio's Proactor model and involves almost no memory allocation: each time, asio reads a fixed-size chunk of data, sends it out, and then reuses the same buffer for the next read.

evpp, by contrast, is a Reactor-model network library. The amount of data received on each read is probably not a fixed size, which can trigger internal memory reallocation in evpp::Buffer and lead to excessive memory allocation.
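The difference between a reused fixed-size buffer and a growing one can be illustrated with a small sketch. It uses neither evpp::Buffer nor asio: a `std::vector<char>` stands in for a growable receive buffer, and the hypothetical helper `CountBufferGrowths` counts how often its storage is reallocated while replaying a sequence of read sizes. Reserving the capacity up front plays the role of the fixed-size buffer.

```cpp
#include <cstddef>
#include <vector>

// Replays a sequence of read sizes through a std::vector used as a receive
// buffer and returns how many times the storage had to be reallocated.
// This is a stand-in for illustration, not the evpp::Buffer implementation.
std::size_t CountBufferGrowths(const std::vector<std::size_t>& read_sizes,
                               std::size_t initial_capacity) {
    std::vector<char> buffer;
    buffer.reserve(initial_capacity);  // 0 means "start empty and grow on demand"

    std::size_t growths = 0;
    for (std::size_t n : read_sizes) {
        const std::size_t before = buffer.capacity();
        buffer.insert(buffer.end(), n, 'x');         // "receive" n bytes
        if (buffer.capacity() != before) ++growths;  // storage was reallocated
        buffer.clear();                              // "echo" the data; capacity is kept
    }
    return growths;
}
```

With read sizes that keep exceeding the current capacity, the buffer that starts empty reallocates repeatedly, whereas a buffer reserved to the largest read size never does. The growth policy of `std::vector` is implementation-defined, so the exact counts may differ between standard libraries.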

We will run another benchmark to verify this analysis. Stay tuned.

The IO Event performance benchmark against Boost.Asio: evpp's performance is about 20%~50% higher than asio's in this case

The ping-pong benchmark against Boost.Asio: evpp's performance is about 5%~20% higher than asio's in this case

The throughput benchmark against libevent2: evpp's performance is about 17%~130% higher than libevent's in this case

The performance benchmark of a queue with std::mutex against boost::lockfree::queue and moodycamel::ConcurrentQueue: moodycamel::ConcurrentQueue is the best; on average its performance is about 25%~100% higher than boost::lockfree::queue and about 100%~500% higher than the queue with std::mutex

The throughput benchmark against Boost.Asio: evpp and asio have similar performance in this case

The throughput benchmark against Boost.Asio (in Chinese): evpp and asio have similar performance in this case

The throughput benchmark against muduo (in Chinese): evpp and muduo have similar performance in this case

The charts are rendered by gochart. Thank you for reading this report. Please feel free to discuss the benchmark with us.