A lightweight implementation of the Embarrassingly Easy Fully Non-Autoregressive Zero-Shot TTS (E2 TTS) model using MLX, with minimal dependencies and efficient computation on Apple Silicon.

    # Quick install (note: the PyPI version may not always be up to date)
    pip install e2tts-mlx

    # For the latest version, install directly from the repository:
    # git clone https://github.com/JosefAlbers/e2tts-mlx.git
    # cd e2tts-mlx
    # pip install -e .

To use a pre-trained model for text-to-speech:

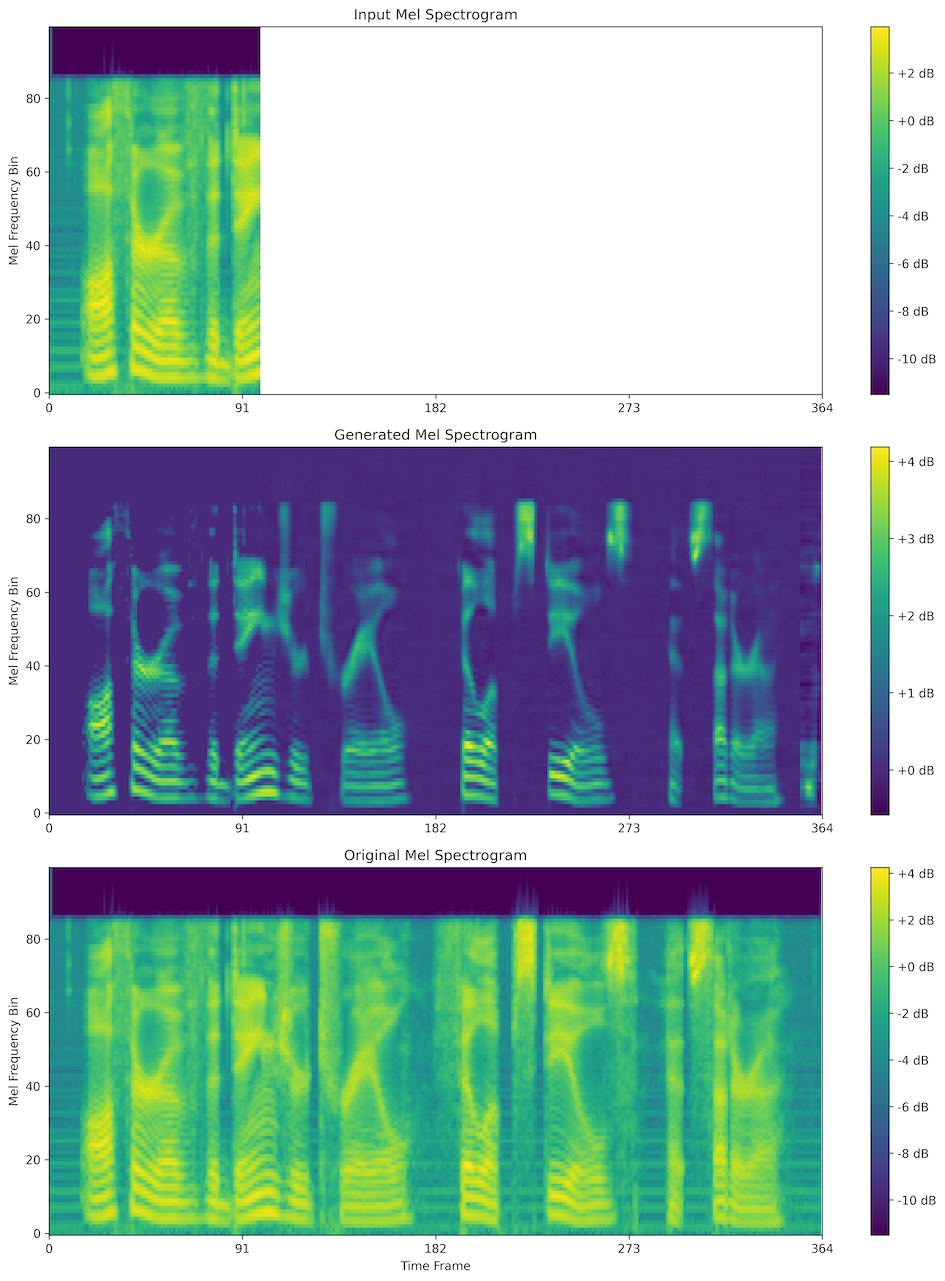

    e2tts 'We must achieve our own salvation.'

This will write `tts_0.wav` to the current directory, which you can then play.

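As a quick sanity check on the generated file, the sketch below reads the WAV header with Python's standard `wave` module and reports the sample rate, channel count, and duration. The helper name `wav_info` is hypothetical and not part of e2tts-mlx, and the sketch assumes the output is a PCM-encoded WAV file (the `wave` module cannot read other encodings).

```python
import os
import wave


def wav_info(path):
    """Return (sample_rate_hz, n_channels, duration_seconds) for a PCM WAV file."""
    with wave.open(path, "rb") as wf:
        rate = wf.getframerate()
        frames = wf.getnframes()
        return rate, wf.getnchannels(), frames / rate


if __name__ == "__main__":
    # "tts_0.wav" is the output name mentioned above; skip quietly if it is absent.
    path = "tts_0.wav"
    if os.path.exists(path):
        rate, channels, seconds = wav_info(path)
        print(f"{path}: {rate} Hz, {channels} channel(s), {seconds:.2f} s")
```

On macOS, the built-in `afplay tts_0.wav` command is one way to play the file from the terminal.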

To train a new model with default settings:

    e2tts

To train with custom options:

    e2tts --batch_size=16 --n_epoch=100 --lr=1e-4 --depth=8 --n_ode=32

Select training options:

- `--batch_size`: Set the batch size (default: 32)
- `--n_epoch`: Set the number of epochs (default: 10)
- `--lr`: Set the learning rate (default: 2e-4)
- `--depth`: Set the model depth (default: 8)
- `--n_ode`: Set the number of steps for sampling (default: 1)
- `--more_ds`: Implements two-set training (default: 'JosefAlbers/lj-speech')
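When launching several training runs from a script, it can help to assemble the command line programmatically. The following is a minimal sketch, not part of e2tts-mlx: `build_train_command` and `DOCUMENTED_OPTIONS` are hypothetical names, and the option set is copied from the list above rather than from the actual CLI, so it may be incomplete (the dataset option is deliberately left out). The sketch only builds the argument list; it does not run anything.

```python
import shlex

# Numeric options and defaults as listed in this README; the real CLI may differ.
DOCUMENTED_OPTIONS = {
    "batch_size": 32,
    "n_epoch": 10,
    "lr": "2e-4",
    "depth": 8,
    "n_ode": 1,
}


def build_train_command(**overrides):
    """Build an `e2tts` argument list from keyword overrides.

    Only options documented above are accepted; anything else raises ValueError.
    """
    unknown = set(overrides) - set(DOCUMENTED_OPTIONS)
    if unknown:
        raise ValueError(f"undocumented option(s): {sorted(unknown)}")
    # Keyword order is preserved, so flags appear in the order they were given.
    return ["e2tts"] + [f"--{name}={value}" for name, value in overrides.items()]


if __name__ == "__main__":
    cmd = build_train_command(batch_size=16, n_epoch=100, lr="1e-4", depth=8, n_ode=32)
    # Print a shell-safe version; pass `cmd` to subprocess.run(cmd) to execute it.
    print(shlex.join(cmd))
```

Passing the learning rate as a string keeps the `1e-4` spelling intact instead of relying on Python's float formatting.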

Special thanks to lucidrains' fantastic code that inspired this project, and to lucasnewman's Vocos implementation that made this possible.

Apache License 2.0